Elon Musk’s just fired up Colossus—the world’s largest Nvidia GPU supercomputer built in just three months from start to finish

- by Fortune

- Sep 04, 2024

September 4, 2024 at 4:40 PM

Richard Bord—WireImage/Getty Images

Say what you will about Elon Musk, but when the technological disrupter sets his mind to something, he plays to win.

Founded only in July of last year, his latest artificial intelligence startup, xAI, brought a new supercomputer dubbed Colossus online over the Labor Day weekend. The system is designed to train the company's large language model (LLM), Grok, a rival to OpenAI’s better-known GPT-4.

While Grok is limited to paying subscribers of Musk’s X social media platform, many Tesla experts speculate it will eventually form the artificial intelligence powering the EV manufacturer’s humanoid robot Optimus.

Musk estimates this strategic lighthouse project could eventually earn Tesla $1 trillion in profits annually.

Located in Tennessee, the new xAI data center houses 100,000 Nvidia benchmark Hopper H100 processors, more than any other individual AI compute cluster.

“From start to finish, it was done in 122 days,” Musk wrote, calling Colossus “the most powerful AI training system in the world.”

This weekend, the @xAI team brought our Colossus 100k H100 training cluster online. From start to finish, it was done in 122 days.

Colossus is the most powerful AI training system in the world. Moreover, it will double in size to 200k (50k H200s) in a few months.

Excellent…

Moreover, xAI enjoyed a head start by helping itself to Tesla’s supply of AI chips already delivered to the EV manufacturer.

The Memphis cluster will train Musk’s third generation of Grok.

"We're hoping to release Grok-3 by December, and Grok-3 should be the most powerful AI in the world at that point," he told conservative podcaster Jordan Peterson in July.

An early beta of Grok-2 just rolled out to users last month.

It was trained on only around 15,000 Nvidia H100 graphics processors, yet by some standards it is already among the most capable large language models, according to competitive chatbot leaderboards.

Upping that GPU count nearly sevenfold suggests Musk has no intention of surrendering the race to develop artificial general intelligence to OpenAI, which he helped cofound in late 2015 after becoming worried that Google was dominating the technology.

Musk later fell out with CEO Sam Altman and is now suing the company a second time.

To help even the odds, xAI raised $6 billion in a Series B funding round in May, with the help of venture capitalists like Andreessen Horowitz and Sequoia Capital, as well as deep-pocketed investors like Fidelity and Saudi Prince Alwaleed bin Talal’s Kingdom Holding.

Tesla could be the next company to invest in Musk's xAI

Musk has also indicated he would propose to Tesla's board a vote on whether to invest potentially $5 billion into xAI as well, a step welcomed by a number of shareholders.

Of the roughly $10B in AI-related expenditures I said Tesla would make this year, about half is internal, primarily the Tesla-designed AI inference computer and sensors present in all of our cars, plus Dojo.

For building the AI training superclusters, NVidia hardware is about…

Please first to comment

Related Post

Stay Connected

Tweets by elonmuskTo get the latest tweets please make sure you are logged in on X on this browser.

Sponsored

Popular Post

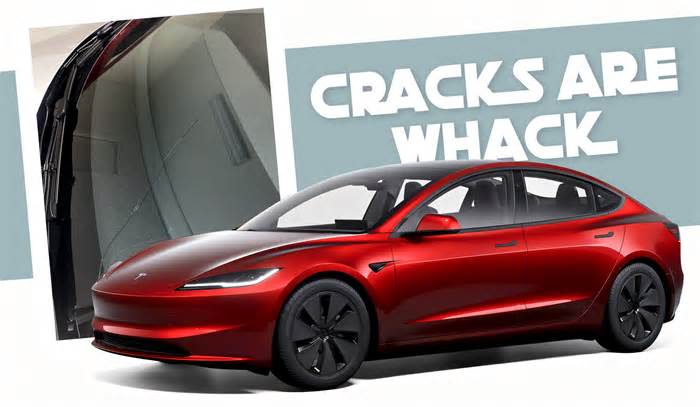

tesla Model 3 Owner Nearly Stung With $1,700 Bill For Windshield Crack After Delivery

33 ViewsDec 28 ,2024

Middle-Aged Dentist Bought a Tesla Cybertruck, Now He Gets All the Attention He Wanted

32 ViewsNov 23 ,2024

Energy

Energy