How Elon Musk’s Chatbot Turned Evil

- by The New Yorker

- Jul 16, 2025

You’re reading The New Yorker’s daily newsletter, a guide to our top stories, featuring exclusive insights from our writers and editors. Sign up to receive it in your inbox. Staff writer covering technology and internet culture.

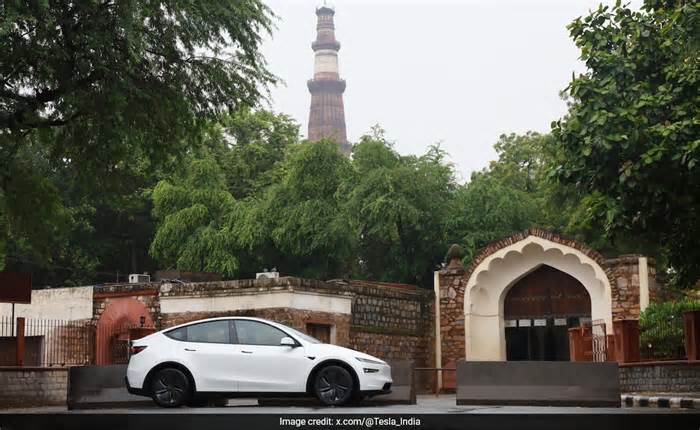

A couple of weekends ago, Grok, the A.I. chatbot that runs across Elon Musk’s X social network, began calling itself “MechaHitler.” In its interactions with X users, it cited Adolf Hitler approvingly and hinted at violence, spewing the kind of toxicity that internet moderators wouldn’t tolerate from a human. Basically, it turned evil, until it was shut down for reprogramming. On Saturday, the normally gleeful and unheeding company confessed to the mistake and said it was sorry: “We deeply apologize for the horrific behavior that many experienced.”

Photograph by Samuel Corum / Getty

Just how worrisome is it that a chatbot went off the rails and spread such garbage on a massive platform used by hundreds of millions of people? The short answer is that it’s really bad. Grok styling itself as a genocidal dictator is the kind of flaw that should make the entire A.I. industry take pause. Its automated hate speech blatantly breaks the illusion of neutrality and safety that artificial-intelligence companies, including OpenAI, have carefully cultivated. In Grok’s case, the glitch appears to have been intentional. Before the outburst, the internal A.I. prompt that drives Grok’s personality was edited, commanding it to “not shy away from making claims which are politically incorrect.” The bot clearly ran with the suggestion.

Presumably, these changes were part of Elon Musk’s personal campaign to build a less woke chatbot. But the incident shows that, far from presenting some evenhanded view of reality, A.I. output simply reflects the concerns and priorities of its designers. (Researchers found that Grok was actually checking Musk’s personal opinions, espoused on X, to shape its responses.) Grok is a product of xAI, Musk’s umbrella A.I. company, which was just announced as a participant in a two-hundred-million-dollar development grant from the Department of Defense. In short, we are allowing buggy, biased A.I. models to influence government policy, not to mention sit alongside the human-to-human conversations of social-media users in our feeds. A.I. goes beyond that, of course; flawed chatbots are already influencing our news consumption, our interpersonal communication, and our educational practices. Generative A.I. is a bit like a drug released into the water supply without proper testing. Regulation can’t come soon enough.

Chayka writes Infinite Scroll, which publishes on Wednesdays. This week’s column is about Opal, the app he’s been using to successfully manage his screentime.

Editor’s Pick

From assassination plots to torture programs, the agency’s darkest operations have always been at the President’s behest.

Illustration by Ben Hickey
