Elon Musk’s AI chatbot Grok says it generated sexual images of minors due to ‘lapses in safeguards’

- by Post

- Jan 02, 2026


The Internet Watch Foundation, a nonprofit that aims to eliminate CSAM online, said the use of AI tools to digitally remove clothing from children and create sexual images has progressed at a “frightening” rate.

In the first six months of 2025, there was a 400% increase in such material, the nonprofit said.

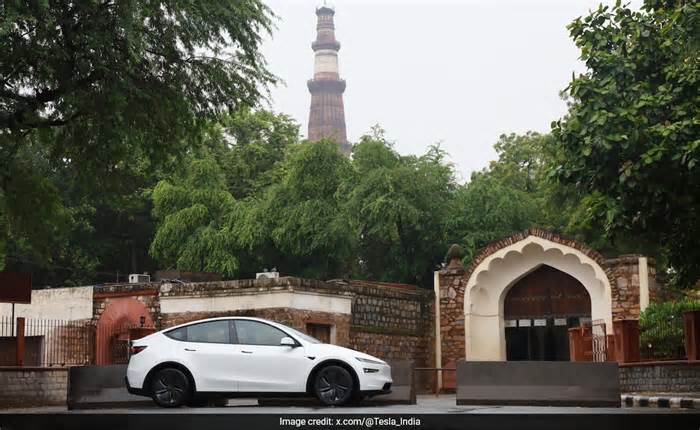

Musk’s AI firm has tried to position Grok as a more explicit platform, last year introducing “Spicy Mode,” which allows partial adult nudity and sexually suggestive content.

It does not allow pornography that uses real people’s likenesses or sexual content involving minors.

Tech firms have sought to reassure the public with promises of stringent safety guardrails as they ramp up their AI efforts – but these content blocks can often be easily evaded.

Those posts – which violated Grok’s own acceptable use policy through the sexualization of children – have since been deleted, according to the chatbot.

Mojahid Mottakin – stock.adobe.com

And in 2023, researchers found more than a thousand images of CSAM in a massive public dataset used to train top AI image generators.

Some platforms have faced heated backlash over their safety guardrails, or lack thereof.

In its terms of service, Meta bans the use of AI in any way that violates any law related to child sexual abuse materials. The company – which owns Facebook, Instagram and WhatsApp – also recently strengthened its teen safety policies.
